May 4, 2023

TinyML for decentralized and energy-efficient AI

Artificial Intelligence (AI) is now part of our daily lives, embedded in connected wristbands and even smartphones. How is it possible to integrate such powerful algorithms into environments where every milliwatt counts? In this article, first published in French on March 20 by ICT Journal, Andrea Dunbar, Group Leader, Edge AI and Vision at CSEM, and PhD candidate Simon Narduzzi present the advantages of TinyML for reducing the cloud workload, along with the challenges that lie ahead.

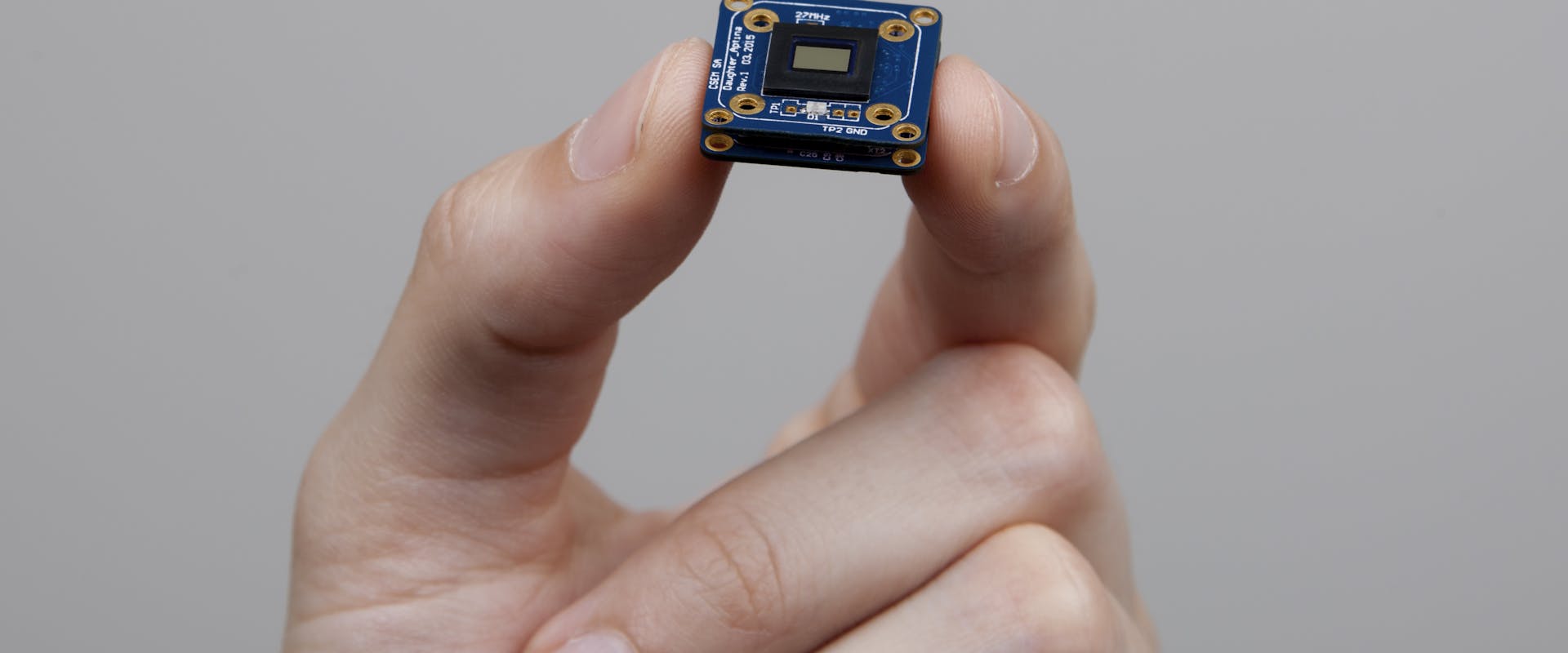

© CSEM - The Vision-in-Package (VIP) module is an effective, flexible computer vision device.